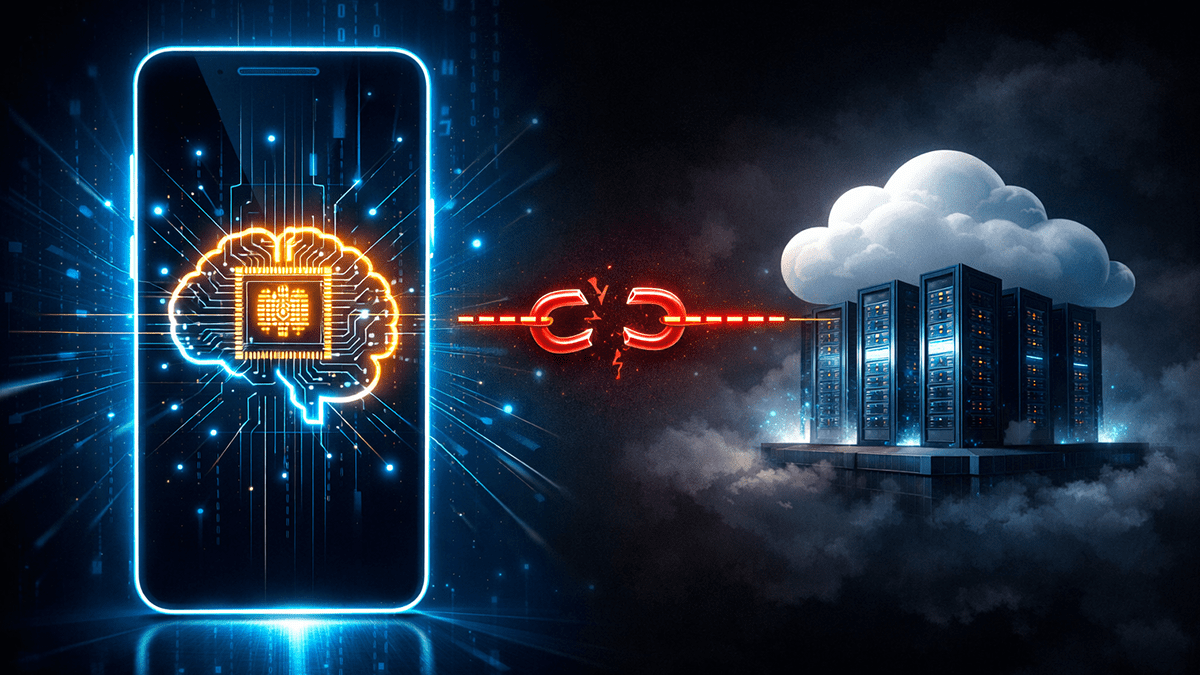

The age of “Cloud Computing” has defined the past decade. Every time you asked Siri a question, used Google Lens to translate a menu, or created an image with Midjourney, your data had to travel hundreds of miles to a massive server farm, get processed, and return to your screen. It felt like magic—but it came with trade-offs: latency, privacy concerns, and reliance on an internet connection.

Now, in 2025 and 2026, things are shifting. We are entering the era of Edge AI.

This isn’t just a minor upgrade for the iPhone 17 or Samsung Galaxy S26—it’s a fundamental change in how computers “think.” Edge AI brings the brain out of the cloud and places it directly in your pocket, on your wrist, and in your car.

In this guide, we’ll cut through the hype, explore the NPU revolution, and show why the most powerful AI of the future will, essentially, run “offline.”

The Hardware Wars – NPU is the New GHz

For twenty years, tech enthusiasts debated clock speeds (GHz) and core counts. Those days are fading. By 2026, the key metric for flagship devices is TOPS (Trillions of Operations Per Second).
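To make TOPS more concrete, here is a rough calculation. It relies on a common rule of thumb: generating one token with a transformer takes about two operations (one multiply and one add) per model parameter. The 3-billion-parameter model and the 75 TOPS NPU are hypothetical example figures. The result is only a compute-bound ceiling, because in practice memory bandwidth, not raw compute, usually limits speed.

```python
# Back-of-the-envelope: what does a TOPS rating buy you?
# Assumptions (illustrative, not vendor figures):
#   - ~2 operations (one multiply + one add) per parameter per generated token
#   - a hypothetical 3-billion-parameter small language model
#   - a hypothetical NPU rated at 75 TOPS and fully utilized (never true in practice)

def ops_per_token(num_params: float) -> float:
    """Approximate number of operations needed to generate one token."""
    return 2.0 * num_params

def peak_tokens_per_second(tops: float, num_params: float) -> float:
    """Compute-bound upper limit on generation speed."""
    ops_per_second = tops * 1e12  # 1 TOPS = one trillion operations per second
    return ops_per_second / ops_per_token(num_params)

if __name__ == "__main__":
    params = 3e9
    npu_tops = 75.0
    print(f"{ops_per_token(params):.1e} ops per token")
    print(f"{peak_tokens_per_second(npu_tops, params):,.0f} tokens/s upper bound")
```

Real on-device generation speeds are far below this ceiling. That is why compact models and memory efficiency matter as much as the headline TOPS number.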

The push to bring AI to the edge has sparked a silicon arms race between giants like Apple, Qualcomm, MediaTek, and Intel.

Apple’s Neural Engine Strategy

Apple predicted this shift early and built the “Neural Engine” into A-series chips years ago. Today’s A19 Pro and M5 chips do more than accelerate Face ID: they run Small Language Models (SLMs) entirely on-device.

When your iPhone suggests a photo edit or summarizes an email, it often isn’t asking OpenAI for help. It’s running transformer models locally. This makes deeply integrated “Apple Intelligence” features possible without the privacy risk of sending your data to a server.

Qualcomm and the Snapdragon Revolution

Qualcomm’s Snapdragon 8 Elite chips pack NPUs capable of 75+ TOPS. That is enough raw power to generate an image with a model like Stable Diffusion on an Android phone in under a second.

That’s why the “AI Phone” hype of 2026 isn’t just marketing. The hardware finally matches the software’s ambitions.

The “AI PC” Era

This isn’t just about phones. Microsoft and Intel’s “AI PC” standard requires laptops to have a dedicated NPU, and Microsoft Copilot is moving from a web-based chatbot to a system-level OS layer. Running those AI tasks on the CPU alone would drain the battery quickly. A dedicated NPU handles them efficiently, whether it is organizing files or transcribing meetings in real time.

The Killer Feature – Security and Privacy

Health Data

A smartwatch monitoring heart rhythm:

- Cloud: Data uploads to a server. If hacked, your medical history is exposed.

- Edge: Data is analyzed locally. Alerts are sent only if needed, and raw data remains encrypted on your wrist.
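The edge side of this comparison can be sketched in a few lines. This is a hypothetical illustration and not any smartwatch vendor’s algorithm. The thresholds, the `HeartSample` type, and the `analyze_locally` function are invented for the example, and real arrhythmia detection is far more sophisticated. The point is the data flow: raw samples stay on the device, and at most a small alert would ever be transmitted.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical thresholds for illustration only (not medical guidance).
HIGH_BPM = 120
LOW_BPM = 40

@dataclass
class HeartSample:
    timestamp_s: int
    bpm: int

def analyze_locally(samples: List[HeartSample], min_consecutive: int = 3) -> Optional[dict]:
    """Scan raw samples on-device and return a small alert dict, or None if all is normal.

    The raw samples never leave this function; only the summary alert would be sent.
    """
    run = 0
    for sample in samples:
        if sample.bpm > HIGH_BPM or sample.bpm < LOW_BPM:
            run += 1
            if run >= min_consecutive:
                # Only a minimal summary is produced, not the raw heart-rate history.
                return {"alert": "abnormal_heart_rate", "at": sample.timestamp_s}
        else:
            run = 0  # a normal reading resets the streak
    return None

if __name__ == "__main__":
    normal = [HeartSample(t, 72) for t in range(10)]
    spike = normal + [HeartSample(10 + t, 150) for t in range(3)]
    print(analyze_locally(normal))  # None: nothing is sent
    print(analyze_locally(spike))   # {'alert': 'abnormal_heart_rate', 'at': 12}
```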

Corporate Secrets

Smart Home Cameras

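Edge AI cameras process video on the device itself, and local-first smart-home standards like Matter let devices talk to each other without a round trip through the cloud. These cameras detect faces or packages and send only notifications, which keeps footage private and reduces the risk of cloud leaks.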

The Latency Advantage – Why 5G Was Not Enough

5G promised speed, but physics has limits. Even 20 milliseconds of latency is too much for critical tasks.

Imagine a child running into the street:

- Cloud scenario: Camera sends video to the cloud -> AI decides “Brake!” -> Command returns. Network delays or tunnels could cause disaster.

- Edge scenario: Car’s onboard AI brakes instantly. No network needed.
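The arithmetic behind this scenario is simple: distance travelled = speed × delay. The sketch below assumes a car at 50 km/h and compares a hypothetical 10 ms on-board decision time with a hypothetical 100 ms cloud round trip. Both delays are illustrative assumptions, not measurements.

```python
def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres a vehicle travels before a braking decision arrives."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # convert km/h to m/s
    return speed_m_per_s * (delay_ms / 1000)  # convert ms to s

if __name__ == "__main__":
    speed = 50.0  # km/h, a typical urban speed limit in many countries
    # Both delays are assumed values chosen for illustration.
    for label, delay in [("on-board (assumed)", 10), ("cloud round trip (assumed)", 100)]:
        print(f"{label}: {distance_during_delay_m(speed, delay):.2f} m")
```

With these assumptions, the car covers about 1.4 metres before a cloud decision can arrive. That is before accounting for a dropped connection in a tunnel.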

AR & VR

For AR glasses to replace smartphones, graphics must update in under 10 milliseconds to avoid motion sickness. Cloud processing can’t keep up. Only on-device processing can handle this kind of real-time work.

Edge AI isn’t just a tech upgrade—it’s the start of a new computing era where speed, privacy, and intelligence coexist directly on your devices.

The “Small” Revolution – SLMs vs. LLMs

For the past few years, the AI development slogan was “Bigger is Better.” GPT-4 is bigger than GPT-3, and Gemini Ultra is bigger than Gemini Pro. The largest of these Large Language Models (LLMs) have hundreds of billions of parameters, and some are reported to reach into the trillions. They are geniuses, but they are heavy. To make Edge AI possible, developers had to pull off a magic trick: make AI smaller without making it stupider. This is the beginning of the era of Small Language Models (SLMs).

The Science of “Model Distillation”

You have a genius professor (the LLM) who knows everything about everything. You don’t need that professor to teach a 1st-grade math class. You just need a bright student (the SLM) who learned everything from the professor.

Tech giants are now using huge cloud models to “teach” smaller models. This is called distillation. It makes it possible to create compact AI models (like Google’s Gemini Nano, Microsoft’s Phi-3, or Meta’s Llama 3.2 1B and 3B) that are specifically designed to run on your phone’s NPU.
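To show what “teaching” means in practice, here is a minimal sketch of the classic soft-target distillation loss described by Hinton et al. The student is trained to match two things: the teacher’s temperature-softened output distribution and the true label. This is a generic plain-Python illustration. It does not reproduce how Google, Microsoft, or Meta actually trained their models, and the `temperature` and `alpha` values are arbitrary assumptions.

```python
import math
from typing import List

def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p: List[float], q: List[float]) -> float:
    """KL(p || q) for discrete distributions; p is the target (teacher) distribution."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      true_label: int, temperature: float = 2.0, alpha: float = 0.5) -> float:
    """Blend of (a) matching the teacher's softened outputs and (b) predicting the true label."""
    soft_teacher = softmax(teacher_logits, temperature)
    soft_student = softmax(student_logits, temperature)
    # The T^2 factor keeps the soft-target term's gradient scale comparable across temperatures.
    soft_loss = kl_divergence(soft_teacher, soft_student) * temperature ** 2
    hard_loss = -math.log(softmax(student_logits)[true_label])  # ordinary cross-entropy
    return alpha * soft_loss + (1 - alpha) * hard_loss

if __name__ == "__main__":
    teacher = [4.0, 1.0, 0.5]  # the "professor" is confident that class 0 is right
    good_student = [3.8, 1.1, 0.4]
    poor_student = [0.5, 3.0, 1.0]
    print(distillation_loss(good_student, teacher, true_label=0))
    print(distillation_loss(poor_student, teacher, true_label=0))
```

A student whose outputs resemble the teacher’s gets a lower loss, so training pushes the small model toward the large model’s behavior.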

Why SLMs are Better for You

Context Awareness: The SLM running on your phone knows you. It knows your calendar, your contacts, and your writing style. The cloud model doesn’t know you unless you upload that information.

Specialization: Instead of having one huge brain, your phone will probably have a group of specialists. One tiny model for photo editing. Another for text summarization. Another for voice translation.

Offline Capability: You can write a novel, edit a 4K video, or organize your entire financial spreadsheet on a plane with no Wi-Fi, and your AI assistance keeps working without a connection.
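The “group of specialists” idea above can be pictured as a simple dispatcher that keeps one small model per task. Everything here is hypothetical. The task names, the stand-in model functions, and the `SpecialistRouter` class are placeholders, and no real on-device runtime is called.

```python
from typing import Callable, Dict

# Hypothetical stand-ins for small on-device models. A real app would load
# quantized model files and run them on the NPU through a vendor runtime.
def photo_model(request: str) -> str:
    return f"[photo-editor-small] edited: {request}"

def summary_model(request: str) -> str:
    return f"[summarizer-small] summary of: {request}"

def translate_model(request: str) -> str:
    return f"[translator-small] translated: {request}"

class SpecialistRouter:
    """Sends each request to the small model registered for its task."""

    def __init__(self) -> None:
        self._specialists: Dict[str, Callable[[str], str]] = {}

    def register(self, task: str, model: Callable[[str], str]) -> None:
        self._specialists[task] = model

    def handle(self, task: str, request: str) -> str:
        if task not in self._specialists:
            raise KeyError(f"no on-device specialist for task '{task}'")
        return self._specialists[task](request)

if __name__ == "__main__":
    router = SpecialistRouter()
    router.register("photo", photo_model)
    router.register("summarize", summary_model)
    router.register("translate", translate_model)
    print(router.handle("summarize", "Quarterly report email"))
```

One appeal of this design is that only the specialist needed for the current task has to be loaded and run.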

The Battery Paradox – Does AI Kill Your Phone?

Battery life is one of the biggest concerns for users. “If my phone is doing all this heavy thinking, won’t the battery die in two hours?”

The irony is that Edge AI could actually increase the battery life of your phone.

The reason for this has to do with the “Energy Cost per Bit.”

One of the most energy-intensive things a smartphone does is run its 5G modem. Keeping the connection open, pinging the tower, sending data, and receiving the response all drain a large amount of battery charge (measured in milliamp-hours, mAh).

The NPU is optimized for one thing and one thing only: matrix multiplication (the math of AI). It is highly efficient at this.

Scenario: You want to get rid of a photobomber in a photo.

Cloud Method: Your phone wakes up the 5G modem (High Power), sends the photo (High Power), waits (Idle Power), receives the photo (High Power).

Edge Method: The NPU wakes up for 0.5 seconds (Medium Power), removes the photobomber from the photo, and goes back to sleep. The 5G modem never wakes up.

By working this way, the phone avoids the “Transmission Penalty.” As chips shrink to 2nm and 1.8nm process nodes in 2026 and 2027, the gap should grow even wider. For AI-heavy workloads, a phone that does the work on its NPU may well outlast one that sends every request to the cloud.
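The cloud-versus-edge comparison above comes down to adding up energy = power × time for each step. The sketch below does that bookkeeping. Every power and duration figure in it is an invented placeholder chosen only to show the calculation, and real numbers vary widely by chip, modem, signal strength, and photo size.

```python
from typing import List, Tuple

# Each step is (description, power in watts, duration in seconds).
# ALL figures are hypothetical placeholders, not measurements of any real phone.
CLOUD_STEPS: List[Tuple[str, float, float]] = [
    ("wake 5G modem", 2.0, 0.5),      # high power
    ("upload photo", 2.5, 1.0),       # high power
    ("wait for server", 0.8, 2.0),    # idle power
    ("download result", 2.0, 1.0),    # high power
]
EDGE_STEPS: List[Tuple[str, float, float]] = [
    ("run NPU photo cleanup", 1.5, 0.5),  # medium power; the modem stays asleep
]

def energy_joules(steps: List[Tuple[str, float, float]]) -> float:
    """Total energy in joules: the sum of power (W) x time (s) over all steps."""
    return sum(power_w * seconds for _, power_w, seconds in steps)

if __name__ == "__main__":
    cloud = energy_joules(CLOUD_STEPS)
    edge = energy_joules(EDGE_STEPS)
    print(f"cloud: {cloud:.2f} J, edge: {edge:.2f} J, ratio: {cloud / edge:.1f}x")
```

With these made-up numbers, the edge path uses roughly a tenth of the energy. The real ratio depends on the device, but the shape of the calculation shows why keeping the modem asleep matters.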

The Green Angle

We simply cannot talk about technology in the 2020s without mentioning sustainability. The dirty secret of generative AI is that data centers are energy vampires. They use gigawatts of power and millions of gallons of water for cooling.

Each time you ask ChatGPT for a joke, a server in a data center somewhere does the work. Multiply that by billions of requests, and the carbon impact is mind-boggling.

Distributed Compute

Edge AI eases this problem. If 1 billion iPhone users run their AI tasks on-device, that is 1 billion computations that do not need to happen in a data center. This shifts the energy use to devices we already charge every day, rather than requiring new coal or gas plants to power new server farms.

Future Predictions – What Will 2030 Look Like?

If 2025 is the year of adoption, what will the next five years look like? Based on current patent filings and research papers, this is where Edge AI is headed.

1. The End of “Apps”:

We are currently living in an app-driven world. You open Uber to get a ride. You open OpenTable to book a dinner. By 2030, Edge AI agents will make apps disappear. You will just tell your phone: “Book me a table at a calm Italian restaurant for 7 PM and pick me up there.” The on-device AI will communicate with the APIs of these services in the background. You will never see the app interface. Your phone will become a real “Digital Concierge.”

2. Hybrid AI (The Best of Both Worlds):

We will not completely ditch the Cloud. We will adopt a Hybrid Model.

Tier 1 (Edge): Your phone will handle 90% of queries (texts, simple questions, navigation).

Tier 2 (Cloud): If you ask a question that is too complex for your phone (e.g., “Design a cure for cancer”), your phone will automatically refer the question to the Cloud, receive the answer, and display it to you. This process will be seamless.

3. Emotional Intelligence:

Future NPUs will enable your devices to analyze not only the words you say but also how you say them. Your phone will recognize the stress in your voice and may automatically turn on “Do Not Disturb” or recommend soothing music. This is the beginning of “Affective Computing.”
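The Hybrid Model from point 2 can be sketched as a router that tries the on-device model first and escalates to the cloud only when needed. This is a minimal illustration under stated assumptions. `local_answer` and `cloud_answer` are hypothetical placeholders. Using the local model’s self-reported confidence, with an arbitrary 0.7 threshold, as the escalation signal is just one possible policy. Real systems may also weigh privacy settings, battery, or connectivity.

```python
from typing import Callable, Tuple

# Hypothetical on-device model: returns (answer, confidence between 0 and 1).
def local_answer(query: str) -> Tuple[str, float]:
    simple = len(query.split()) <= 8  # toy heuristic standing in for a real SLM
    return (f"[on-device] {query}", 0.9 if simple else 0.3)

# Hypothetical cloud model: only called when the local model is unsure.
def cloud_answer(query: str) -> str:
    return f"[cloud] {query}"

def hybrid_answer(query: str,
                  local: Callable[[str], Tuple[str, float]] = local_answer,
                  cloud: Callable[[str], str] = cloud_answer,
                  threshold: float = 0.7,
                  online: bool = True) -> str:
    """Tier 1: answer on-device. Tier 2: escalate to the cloud if confidence is low."""
    answer, confidence = local(query)
    if confidence >= threshold or not online:
        # Keep the local answer if it is good enough, or if there is no connection.
        return answer
    return cloud(query)

if __name__ == "__main__":
    print(hybrid_answer("Text Mom I'm running late"))
    print(hybrid_answer("Summarize every published clinical trial on this drug and propose a new one"))
```

Falling back to the local answer when offline keeps the phone useful on a plane, at the cost of a possibly weaker answer.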

FAQs

What is the difference between Edge AI and Cloud AI?

Cloud AI processes data on remote servers requiring an internet connection (like ChatGPT). Edge AI processes data locally on your device (smartphone, car, or watch), offering faster speeds, better privacy, and offline capabilities.

Do I need a new phone to use Edge AI?

Likely, yes. Older phones can run very basic AI, but true Edge AI requires a processor with a capable dedicated NPU (Neural Processing Unit). Examples include the Apple A17 Pro/A18 and the Qualcomm Snapdragon 8 Gen 3/8 Elite, along with newer chips.

Is Edge AI safe for privacy?

Yes, it is generally safer than Cloud AI. Since the processing happens on your device, your personal data (photos, messages, health stats) does not need to be uploaded to a company’s server, reducing the risk of data breaches.